Implementing Reliable Data Analytics Solutions for Corrections

- By Maruthi Govindappa

- January 9, 2020


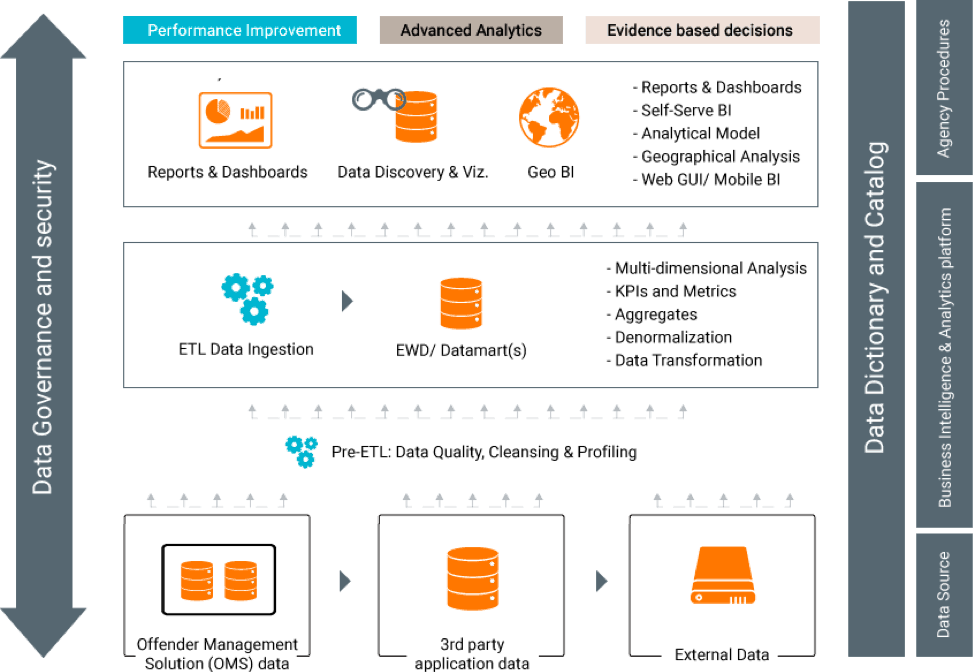

Enabling a Business Intelligence (BI) and Data Analytics solution is an essential component of the information strategy for correctional agencies. A data-driven, evidence-based decision-making process requires a reliable (i.e., accurate, timely, and secure) BI and Data Analytics platform.

Following my previous post, Understanding Business Intelligence Ecosystem for Corrections, in which we touched on BI functional components, I am continuing the dialogue by discussing key criteria for a reliable BI and Data Analytics solution in corrections, and the requirements that will support a successful implementation.

A BI and Data Analytics platform provides greater value for correctional agencies when the following are met:

- Information extracted from the operational data is accurate

- Data is provided in a timely manner and is presented in its most recent state, along with historical changes

- Data is secured and available only to those who are authorized to access it

As information technology has matured, BI and Analytics solutions have evolved to meet these key criteria.

As part of Offender Management System (OMS) modernization, it’s recommended that correctional agencies treat the BI and Analytics solution as an integral part of their overall information strategy. This way, the complete lifecycle of the data is covered: from data recording via the OMS to data consumption via the BI and Analytics platform.

In discussions with correctional practitioners, the pain points that come up most often relate to the sharing and consumption of data:

- What are the best approaches to gain value from available and ample operational data

- How to achieve a reliable information system and/or a single source of truth

- How to minimize the effort of fetching the data and producing the reports requested by various agency stakeholders

- What is required to address data integrity and quality issues

I commonly use a ‘food supply chain in the restaurant industry’ analogy to describe the BI lifecycle. In a food supply chain, the raw materials are harvested, undergo quality control, and are then processed, shipped, and stored in a warehouse, from where they eventually make their way into a restaurant’s inventory to be cooked and served. All these steps comply with the policies of food regulatory boards. In a similar fashion, raw transactional data is extracted (harvested) from operational data source system(s), checked for quality, transformed into analytical form (processed), and integrated and stored in a warehouse, from which reports and dashboards are delivered to end users. The entire data flow (from source to reports and dashboards) is monitored through well-defined data governance policies and procedures.

Enterprise Business Intelligence and Data Analytics solutions are focused primarily on the following four key functional areas:

- Data Engineering

- Data Management

- Information Delivery & Analytics

- Data Governance

1. Data Engineering

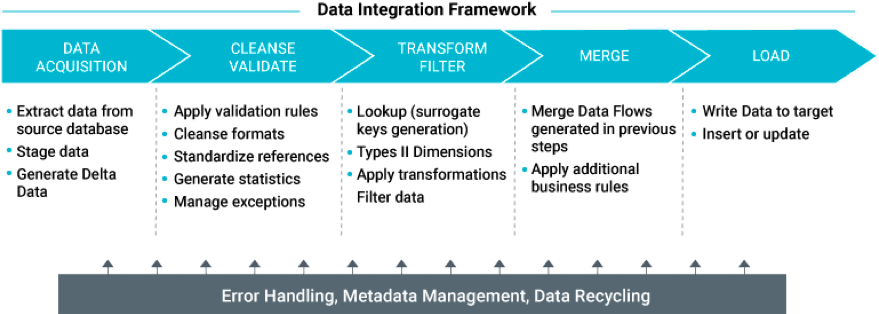

Data Engineering is a core function of the BI ecosystem; it plays a pivotal role in extracting data from operational data source system(s), transforming the raw transactional data into analytical information, and finally integrating and storing it in a Datawarehouse. In the correctional domain, transactional data (such as offender attributes, locations, incidents, sentencing, and grievances) is extracted from the agency’s OMS, transformed into analytical data that supports the agency’s analytical use cases, and stored in a Datawarehouse. Data engineering involves the following functions:

- Data Profiling: performed to understand the technical nature of data (such as data types, uniqueness, null values, etc.) and build the metadata and data integration specifications.

- Data Quality: for the BI/Datawarehouse to deliver accurate information, the source data must be accurate (“garbage in, garbage out”). It is therefore important to validate the source data and apply ‘exception handling rules’ before loading it into the Datawarehouse. The data quality function verifies that the data behaves in accordance with the agency’s policies and procedures.

- Data Integration: the largest part of the data engineering process, in which raw transactional data is transformed into analytical data according to a pre-defined set of transformation business rules and then loaded into the Datawarehouse. Data integration is implemented through ETL (Extract, Transform, and Load) or ELT (Extract, Load, and Transform) processes.
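The three functions above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the field names (`offender_id`, `admission_date`, `facility`), the sample rows, and the two exception rules are hypothetical and do not come from any particular OMS.

```python
from datetime import date

# Hypothetical raw OMS rows; field names and values are illustrative only.
raw_rows = [
    {"offender_id": "A100", "admission_date": "2019-03-01", "facility": "North"},
    {"offender_id": "A101", "admission_date": None, "facility": "North"},
    {"offender_id": "A100", "admission_date": "2019-03-01", "facility": "North"},
    {"offender_id": "A102", "admission_date": "2019-11-15", "facility": "south "},
]


def profile(rows, column):
    """Data profiling: count rows, nulls, and distinct values for one column."""
    values = [row[column] for row in rows]
    non_null = [value for value in values if value is not None]
    return {
        "rows": len(values),
        "nulls": len(values) - len(non_null),
        "distinct": len(set(non_null)),
    }


def quality_check(rows):
    """Data quality: separate accepted rows from exceptions (with a reason)."""
    accepted, exceptions, seen_ids = [], [], set()
    for row in rows:
        if row["admission_date"] is None:
            exceptions.append((row, "missing admission_date"))
        elif row["offender_id"] in seen_ids:
            exceptions.append((row, "duplicate offender_id"))
        else:
            seen_ids.add(row["offender_id"])
            accepted.append(row)
    return accepted, exceptions


def transform(row):
    """Data integration: convert one accepted raw row into analytical form."""
    admitted = date.fromisoformat(row["admission_date"])
    return {
        "offender_id": row["offender_id"],
        "facility": row["facility"].strip().title(),
        "admission_year": admitted.year,
        "admission_month": admitted.month,
    }


accepted, exceptions = quality_check(raw_rows)
warehouse_rows = [transform(row) for row in accepted]
```

Rows that fail a rule are set aside with a reason instead of being silently dropped, so they can be reviewed and corrected at the source before the next load.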

2. Data Management

The data required for analytics is processed and loaded into layers, so a well-defined data architecture is essential for the successful implementation of a DW/BI solution. The BI data architecture and modeling should be flexible enough to extend and accommodate future business needs. For example, it should be possible to add attributes (such as job profile, education, and program-related attributes) without overhauling the current Datawarehouse architecture. Data management should also build and maintain historical data, enabling the agency to perform advanced analytics such as trend analysis and prediction.

A typical DW/BI architecture has:

- Operational Data Store (ODS): the first data layer of the BI platform. It stages the operational data in its original format and keeps track of the history of transactional data by taking periodic snapshots of the data source.

- Datawarehouse (DW): the core of the DW/BI solution, where analytically transformed data (the output of the data integration process) is denormalized and stored dimensionally. Data in the Datawarehouse is granular, supporting multi-dimensional analysis.

- DataMart: provides aggregated information derived from the dimensional data in the Datawarehouse. A DataMart offers an abstract view of the Datawarehouse aligned with specific business functions and needs, and it is the gateway through which user queries for analytical information are answered.
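As a rough illustration of how the three layers relate, the sketch below keeps dated ODS snapshots, flattens them into granular warehouse rows, and aggregates those rows into a small DataMart. The snapshot dates, field names, and the head-count measure are assumptions made for the example, and in-memory dictionaries stand in for real database tables.

```python
from collections import defaultdict

# ODS: periodic snapshots of the source, kept in original form (hypothetical data).
ods_snapshots = {
    "2020-01-01": [
        {"offender_id": "A100", "facility": "North"},
        {"offender_id": "A102", "facility": "South"},
    ],
    "2020-01-02": [
        {"offender_id": "A100", "facility": "South"},
        {"offender_id": "A102", "facility": "South"},
    ],
}


def build_fact_rows(snapshots):
    """Datawarehouse: one granular row per offender per snapshot date."""
    return [
        {"snapshot_date": day, "offender_id": row["offender_id"], "facility": row["facility"]}
        for day, rows in sorted(snapshots.items())
        for row in rows
    ]


def build_population_mart(fact_rows):
    """DataMart: aggregate head count by snapshot date and facility."""
    counts = defaultdict(int)
    for row in fact_rows:
        counts[(row["snapshot_date"], row["facility"])] += 1
    return dict(counts)


fact_rows = build_fact_rows(ods_snapshots)
population_mart = build_population_mart(fact_rows)
```

Because the ODS retains each snapshot, the transfer of offender `A100` from North to South remains visible as history, which is what makes trend analysis possible later.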

Analytical data is queried and delivered as actionable, insightful analytical information via the Information Delivery process. The analytical information is presented as Measures, Metrics, and Key Performance Indicators (KPIs), and can be sliced and diced to perform multi-dimensional data analysis. Information is delivered as reports and dashboards in which data is represented visually, enabling end users to perform interactive analyses.

3. Information Delivery and Analytics

BI Information Delivery and Analytics involves:

- Business Semantic Data Layer: applied on top of the DataMart, it presents data in a format that is friendly to business users. The semantic layer reduces the complexity of building reports and dashboards, which expedites the process.

- Self-service: Modern BI tools enable end users to perform self-service reporting and analytics, with no or minimal IT involvement. The BI tools are typically web based and intuitive. A well-defined Business Semantic Data Layer leads to a successful self-service BI solution and a centralized, secured information repository.

- Reports and Dashboards: BI information is typically consumed via interactive reports and dashboards, which modern BI tools make available securely. Reports and dashboards can be scheduled for periodic automatic refresh and delivery, and they can be exported to other commonly used formats such as Excel and PDF.
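To make the role of the semantic layer concrete, here is a minimal sketch in Python. The technical column names (`fac_cd`, `inc_typ`, `inc_cnt`), the business names, and the `report` helper are all hypothetical; commercial BI tools implement this mapping in their own modeling layers.

```python
from collections import defaultdict

# Hypothetical DataMart rows with technical column names.
mart_rows = [
    {"fac_cd": "North", "inc_typ": "Contraband", "inc_cnt": 3},
    {"fac_cd": "North", "inc_typ": "Assault", "inc_cnt": 1},
    {"fac_cd": "South", "inc_typ": "Contraband", "inc_cnt": 2},
]

# Semantic layer: business-friendly names mapped to physical columns.
SEMANTIC_LAYER = {
    "Facility": "fac_cd",
    "Incident Type": "inc_typ",
    "Incident Count": "inc_cnt",
}


def report(measure, by):
    """Sum a measure grouped by a dimension, both given by business name."""
    measure_col = SEMANTIC_LAYER[measure]
    dimension_col = SEMANTIC_LAYER[by]
    totals = defaultdict(int)
    for row in mart_rows:
        totals[row[dimension_col]] += row[measure_col]
    return dict(totals)


# The same measure sliced by two different dimensions.
by_facility = report("Incident Count", by="Facility")
by_type = report("Incident Count", by="Incident Type")
```

A business user asks for "Incident Count by Facility" without needing to know the underlying column names, which is what makes self-service reporting practical.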

4. Data Governance

With the rapid growth of digital transformation, a well-defined Data Governance strategy becomes essential. A good Data Governance strategy ensures a trustworthy and reliable BI and Analytics platform. Data Governance combines process-driven and technology-driven techniques to enable a controlled and monitored delivery of information to end users. Data Governance is an extensive topic in its own right, and in-depth study is required for a successful implementation. At a high level, some of the techniques involved in Data Governance are:

- Data Integrity and Compliance: the data quality check is a key step in data engineering. The integrity of the source data must be validated carefully; failing to do so could result in erroneous information being shared. Integrity checks also need to be reviewed periodically against the agency’s most up-to-date policies and procedures.

- Data Stewardship: an essential component of data governance. Stewardship tasks are performed by Data Stewards, who are typically Subject Matter Experts (SMEs) from the functional units. Data Stewards monitor data for compliance with a pre-defined set of business rules aligned with the agency’s policies and procedures. In the event of non-compliance, they must take appropriate corrective action or follow up with the relevant stakeholder to fix the data issues.

- Data Cataloging: as an agency’s information and BI landscape expands, end users increasingly struggle to understand what information is available and where it is housed. Data Cataloging extracts the metadata of the available information and curates it for end users. In the food supply chain analogy, Data Cataloging would be the equivalent of a menu.

- Audit: the BI and Analytics platform must include a technical process that enables auditing of data management. In a DW/BI implementation, the data flow from source to final delivery point must be tracked and monitored in order to support audit needs.

- Security: the BI and Analytics platform requires a well-defined security model at all stages of BI data management and information delivery. Because of the sensitive nature of correctional agency operations, security is of paramount importance. Data democratization must be controlled: data is made available securely, and only to authorized persons based on their job functions.
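The Security and Audit points can be illustrated together with a small sketch of role-based access in which every access attempt is logged. The role names, report names, and the `open_report` helper are hypothetical; a production platform would rely on the security and auditing features of its BI tool and database rather than application code like this.

```python
from datetime import datetime, timezone

# Hypothetical mapping of job functions to the reports they may open.
ROLE_PERMISSIONS = {
    "warden": {"population_dashboard", "incident_report"},
    "case_manager": {"population_dashboard"},
}

# Audit trail: every access attempt is recorded, whether allowed or denied.
audit_log = []


def open_report(user, role, report_name):
    """Deliver a report only to an authorized role, and log the attempt."""
    allowed = report_name in ROLE_PERMISSIONS.get(role, set())
    audit_log.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "report": report_name,
        "allowed": allowed,
    })
    if not allowed:
        raise PermissionError(f"{role} may not open {report_name}")
    return f"{report_name} delivered to {user}"
```

Calling `open_report("jdoe", "warden", "incident_report")` succeeds, while the same request from a `case_manager` raises `PermissionError`; both attempts appear in `audit_log`, so denied requests are as traceable as granted ones.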

I hope you found this post useful. Any feedback, comments, and suggested future topics are welcome at MGovindappa@abilis-solutions.com.

© 2020 Abilis Solutions. All rights reserved.
